Somewhere in a hospital radiology department, an AI system is analyzing a chest scan. It flags something: a nodule, perhaps, or an anomaly. Since it was first approved, it has learned from tens of thousands of images. Is it still the same algorithm that passed FDA review? And if it isn’t, how would anyone know? These are the questions that should make regulators uneasy.

One of the more genuinely challenging regulatory issues in contemporary medicine is rooted in that tension. The FDA was built over many years to assess things that don’t change. A replacement hip. A glucose monitor. A surgical stapler. Once a device is tested and approved, the one that goes into use is the one that was evaluated. That is not how adaptive AI operates. Machine learning algorithms are designed to get better over time; they can take in new information, modify their pattern recognition, and produce new results. Six months from now, the same input might yield a different output than it did at launch. That’s not a bug. It’s the whole idea. It also raises a regulatory problem that the FDA has been working through publicly for several years.

The agency had authorized more than 500 AI-enabled medical devices as of early 2025. That figure represents real momentum, including algorithms that detect early signs of sepsis in emergency rooms, systems that flag potential stroke on CT scans, and imaging software that screens for diabetic retinopathy. The clinical potential is genuine and, in certain situations, has already been shown to be beneficial. However, while the tools are already operating, the framework for monitoring them after deployment is still being put together.

| Topic Overview | |
|---|---|
| Subject | FDA regulation of adaptive AI/ML-enabled medical devices |
| Regulatory Body | U.S. Food and Drug Administration (FDA), Center for Devices and Radiological Health (CDRH) |
| Key Framework | Total Product Lifecycle (TPLC) approach + Predetermined Change Control Plans (PCCPs) |
| PCCPs Finalized | December 2024 |
| AI-Enabled Devices Authorized | 500+ as of 2025 |
| Core Problem | Traditional regulation assumes static devices; adaptive AI continuously changes post-approval |
| Key Risks | Performance drift, algorithmic bias, “black box” opacity, automation bias in clinical settings |
| Notable AI Devices | IDx-DR (diabetic retinopathy), ContaCT (stroke detection), OsteoDetect (fracture detection) |
| Key Research | PMC/NIH: “The Illusion of Safety” (Abulibdeh et al., 2025); Pew Charitable Trusts AI regulation analysis |
| Reference Links | FDA: Artificial Intelligence in Software as a Medical Device; Pew Charitable Trusts: How FDA Regulates AI in Medical Products |

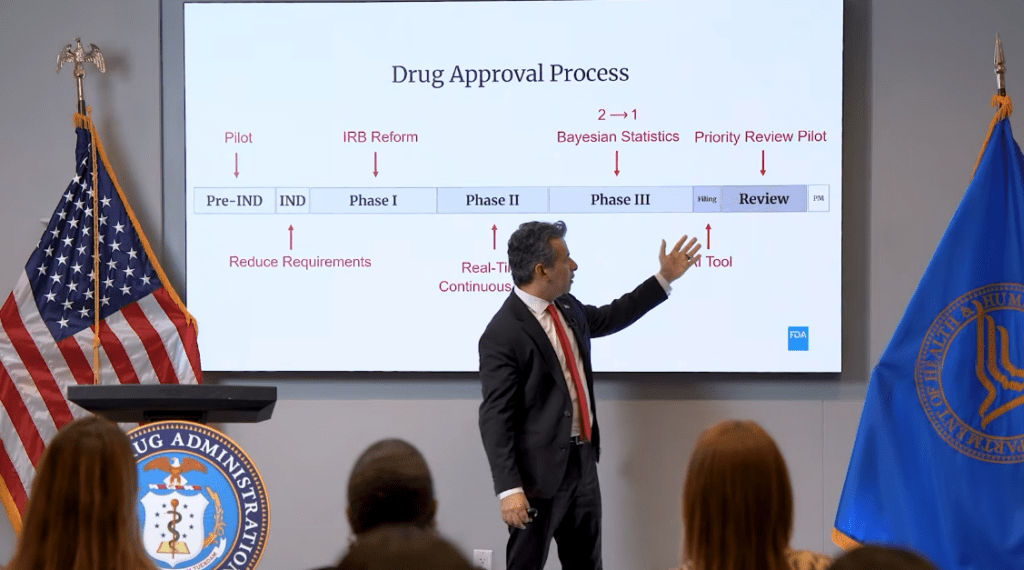

The Predetermined Change Control Plan, or PCCP, is the FDA’s main response to this problem. It is a document that manufacturers submit with their initial application, outlining the algorithmic changes they anticipate and how each will be validated. After years of drafts and public feedback, the PCCP guidance was finalized in December 2024. In theory, it gives the agency something akin to a map of expected evolution: this is what the system is expected to learn, this is how we will test it while it learns, and this is what we will report. Developers who stay within the parameters of their approved plan do not have to submit a new application every time the algorithm retrains. That is a significant compromise. The regulatory process would be practically unworkable if each incremental change in a continuously learning system required a complete premarket review.
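The logic of that compromise can be sketched in a few lines of Python. This is an illustration only, not the FDA’s format or any manufacturer’s actual plan: the class names, the change-type labels, and the sensitivity and specificity thresholds are all hypothetical, chosen to show how an update might be checked against limits agreed in advance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangePlan:
    """Hypothetical, simplified stand-in for the limits a PCCP agrees in advance."""
    allowed_change_types: frozenset
    min_sensitivity: float
    min_specificity: float


@dataclass(frozen=True)
class ModelUpdate:
    """A proposed retraining, with metrics from the plan's validation protocol."""
    change_type: str
    sensitivity: float
    specificity: float


def within_plan(plan: ChangePlan, update: ModelUpdate) -> bool:
    """Return True only if the update stays inside the pre-agreed envelope."""
    return (
        update.change_type in plan.allowed_change_types
        and update.sensitivity >= plan.min_sensitivity
        and update.specificity >= plan.min_specificity
    )


# Illustrative values only; real plans define their own changes and thresholds.
plan = ChangePlan(
    allowed_change_types=frozenset({"retrain_same_architecture"}),
    min_sensitivity=0.90,
    min_specificity=0.85,
)

routine = ModelUpdate("retrain_same_architecture", 0.93, 0.88)
out_of_scope = ModelUpdate("new_intended_use", 0.95, 0.90)

print(within_plan(plan, routine))       # True
print(within_plan(plan, out_of_scope))  # False
```

In this toy version, an update that falls outside the plan, whether because of the kind of change or because it misses a threshold, is the case that would call for a fresh submission rather than a routine rollout.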

However, researchers and independent observers have been raising concerns about the limitations of the PCCP framework. The FDA’s current strategy is creating an “illusion of safety,” according to a 2025 paper published in PLOS Digital Health by researchers including scientists from MIT and Harvard-affiliated institutions. While not discounting the agency’s efforts, the authors contend that post-market monitoring is still inconsistent and underdeveloped in important ways. The infrastructure and resources needed for continuous real-time monitoring of adaptive algorithms are not yet fully incorporated into the regulatory process. As a result, manufacturers are primarily responsible for monitoring the performance of their own deployed algorithms, and regulators have little insight into whether that monitoring is thorough.

Then there is the “black box” problem, which is more difficult to solve and doesn’t get any easier as models get more powerful. Many deep learning systems, such as those that model disease risk from electronic health records or analyze medical images, arrive at their conclusions through computational processes that are difficult for users to understand. That opacity existed at the time of approval, and it does not diminish as the algorithm keeps learning. The inability to trace the reasoning is a real issue for a clinician trying to determine how much weight to give an AI recommendation, or for a regulator trying to determine whether a performance shift represents normal improvement or dangerous drift. The FDA has released transparency guidelines, and the 2024 final guidance on submissions of AI devices places a strong emphasis on demographic representativeness and bias mitigation. However, transparency guidelines are not the same thing as transparent systems.

The disparity between how fast these tools are being adopted and how fast the oversight frameworks are developing is difficult to ignore. According to a 2020 analysis of datasets used to train diagnostic AI, about 70% of the studies drew their data from just three U.S. states. When an algorithm is used in clinical settings that differ substantially in geography or demographics, it may perform markedly worse than its initial validation indicated. If post-market monitoring isn’t consistently picking that up, deteriorated performance might go unnoticed until something goes wrong.
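What picking that up could look like is not complicated in principle. The Python sketch below is a minimal illustration under stated assumptions, not a description of any real monitoring system: the record format, the site names, the 0.90 baseline, and the 0.05 tolerance are all invented for the example. It computes sensitivity separately for each deployment site and lists the sites that fall below the figure from the original validation.

```python
from collections import defaultdict


def sensitivity_by_site(records):
    """Compute per-site sensitivity.

    records: iterable of (site, model_flagged, disease_present) tuples.
    Only confirmed-positive cases count toward sensitivity.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for site, flagged, present in records:
        if present:
            positives[site] += 1
            if flagged:
                true_pos[site] += 1
    return {site: true_pos[site] / n for site, n in positives.items()}


def sites_below_baseline(records, baseline, tolerance):
    """Return sites whose sensitivity is below baseline minus tolerance."""
    per_site = sensitivity_by_site(records)
    return sorted(s for s, v in per_site.items() if v < baseline - tolerance)


# Invented example data: 10 confirmed cases per site, plus some negatives.
records = (
    [("academic", True, True)] * 9
    + [("academic", False, True)] * 1
    + [("rural", True, True)] * 6
    + [("rural", False, True)] * 4
    + [("rural", False, False)] * 5
)

print(sensitivity_by_site(records))               # {'academic': 0.9, 'rural': 0.6}
print(sites_below_baseline(records, 0.90, 0.05))  # ['rural']
```

The hard part in practice is not the arithmetic but the inputs: someone has to collect confirmed outcomes at every site, and someone has to be obliged to look at the result.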

The FDA has created what may be the world’s most advanced regulatory framework for adaptive AI in medical devices. The PCCP mechanism, the Good Machine Learning Practice guidelines, and the Total Product Lifecycle approach are all genuine attempts to establish governance for a genuinely new class of tool. Other jurisdictions, such as Health Canada and the UK’s MHRA, are working to align their frameworks with the FDA’s methodology, which suggests some confidence in the agency’s direction. When the monitoring infrastructure catches up, the current framework might prove sufficient. However, that infrastructure is still lacking, and the devices are already in use in clinics and hospitals, where they are influencing or making important decisions about patient care.

There is a real gap between where the oversight is and where it should be. It shows up when a radiologist follows a flagged finding without being told that the algorithm generating it has changed. It shows up when a hospital system takes a clinical decision support tool that was built and validated in a large academic medical center and applies it in a small rural facility with a different patient population and different standards of care. It shows up when someone asks whether a bias found in an AI’s outputs was present in the original, approved version or developed later, and no one can answer with confidence. The FDA is taking these issues very seriously. The devices are not waiting for that work to be finished.